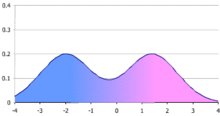

In statistics, a bimodal distribution is a continuous probability distribution with two different modes. These appear as distinct peaks (local maxima) in the probability density function, as shown in Figure 1.

More generally, a multimodal distribution is a continuous probability distribution with two or more modes, as illustrated in Figure 3.

Terminology

When the two modes are unequal, the larger mode is known as the major mode and the other as the minor mode. The least frequent value between the modes is known as the antimode. The difference between the major and minor modes is known as the amplitude. In time series the major mode is called the acrophase and the antimode the bathyphase.

Galtung's classification

Galtung introduced a classification system (AJUS) for distributions:[2]

- A: unimodal distribution - peak in the middle

- J: unimodal - peak at either end

- U: bimodal - peaks at both ends

- S: bimodal or multimodal - multiple peaks

This classification has since been modified slightly:

- J (modified) - peak on right

- L: unimodal - peak on left

- F: no peak (flat)

Under this classification bimodal distributions are classified as type S or U.

Examples

Occurrences in nature

Examples of variables with bimodal distributions include the time between eruptions of certain geysers, the color of galaxies, the size of worker weaver ants, the age of incidence of Hodgkin's lymphoma, the speed of inactivation of the drug isoniazid in US adults, the absolute magnitude of novae, and the circadian activity patterns of those crepuscular animals that are active both in morning and evening twilight. In fishery science multimodal length distributions reflect the different year classes and can thus be used for age distribution and growth estimates of the fish population.[3] Sediments are usually distributed in a bimodal fashion.

Mathematical distributions

Important bimodal distributions include the arcsine distribution and the beta distribution (when both of its shape parameters are less than 1). Another bimodal distribution is the U-quadratic distribution.

The ratio of two normally distributed variables can also be bimodally distributed. Let

$$R = \frac{a + x}{b + y}$$

where a and b are constant and x and y are distributed as normal variables with a mean of 0 and a standard deviation of 1. R has a known density that can be expressed as a confluent hypergeometric function.[4]

The distribution of the reciprocal of a t-distributed random variable is bimodal when the degrees of freedom are more than one. Similarly, the reciprocal of a normally distributed variable is also bimodally distributed.

Origins

A bimodal distribution most commonly arises as a mixture of two different unimodal distributions (i.e. distributions having only one mode). In other words, the bimodally distributed random variable X is defined as Y with probability α or Z with probability (1 − α), where Y and Z are unimodal random variables and 0 < α < 1 is a mixture coefficient. For example, the bimodal distribution of sizes of weaver ant workers shown in Figure 2 arises due to the existence of two distinct classes of workers, namely major workers and minor workers.[1] In this case, Y would be the size of a random major worker, Z the size of a random minor worker, and α the proportion of worker weaver ants that are major workers.
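The mixture definition above can be sketched as a sampling procedure: draw Y with probability α and Z otherwise. The component distributions, their parameters, and the value of α below are hypothetical, chosen only for illustration and not taken from the weaver ant data.

```python
import random

def sample_mixture(alpha, draw_y, draw_z, rng):
    """Return one draw of X: Y with probability alpha, otherwise Z."""
    return draw_y(rng) if rng.random() < alpha else draw_z(rng)

rng = random.Random(0)

# Hypothetical components (illustrative numbers only).
def draw_major(r):
    return r.gauss(8.0, 0.5)   # Y: e.g. size of a random major worker

def draw_minor(r):
    return r.gauss(4.0, 0.5)   # Z: e.g. size of a random minor worker

alpha = 0.3                    # assumed proportion of major workers

sample = [sample_mixture(alpha, draw_major, draw_minor, rng) for _ in range(10_000)]

# The two components are well separated, so the share of draws above the
# midpoint 6.0 should be close to alpha.
share_major = sum(x > 6.0 for x in sample) / len(sample)
print(round(share_major, 2))
```

A histogram of `sample` would show two peaks, one near each component mean.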

Mixtures with two distinct components need not be bimodal, and two component mixtures of unimodal component densities can have more than two modes. Therefore, there is no immediate connection between the number of components in a mixture and the number of modes of the resulting density.

Mixture of two normal distributions

A mixture of two normal distributions has five parameters to estimate: the two means, the two variances and the mixing parameter. A mixture of two normal distributions with equal standard deviations is bimodal only if their means differ by at least twice the common standard deviation.[5] Estimation of the parameters is simplified if the variances can be assumed to be equal (the homoscedastic case).
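The separation condition can be checked numerically by counting local maxima of the mixture density on a grid. The sketch below assumes equal weights and a common standard deviation of 1; the helper names and the grid resolution are illustrative choices. With unequal weights, a separation of two standard deviations is necessary but may not be sufficient.

```python
import math

def normal_pdf(x, mu, sigma):
    """Density of a normal distribution with mean mu and standard deviation sigma."""
    z = (x - mu) / sigma
    return math.exp(-0.5 * z * z) / (sigma * math.sqrt(2.0 * math.pi))

def count_modes(p, mu1, mu2, sigma, steps=2001):
    """Count local maxima of p*N(mu1, sigma) + (1-p)*N(mu2, sigma) on a grid."""
    lo = min(mu1, mu2) - 4.0 * sigma
    hi = max(mu1, mu2) + 4.0 * sigma
    xs = [lo + (hi - lo) * i / (steps - 1) for i in range(steps)]
    ys = [p * normal_pdf(x, mu1, sigma) + (1.0 - p) * normal_pdf(x, mu2, sigma)
          for x in xs]
    # A grid point is a mode if it rises from the left and does not rise to the right.
    return sum(1 for i in range(1, steps - 1) if ys[i - 1] < ys[i] >= ys[i + 1])

modes_close = count_modes(0.5, 0.0, 1.5, 1.0)  # means 1.5 standard deviations apart
modes_far = count_modes(0.5, 0.0, 3.0, 1.0)    # means 3 standard deviations apart
print(modes_close, modes_far)
```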

If the means of the two normal distributions are equal, the combined distribution is unimodal. Conditions for unimodality of the combined distribution were derived by Eisenberger.[6] Necessary and sufficient conditions for a mixture of normal distributions to be bimodal have been identified by Ray and Lindsay.[7]

Mixtures of other distributions require additional parameters to be estimated.

Properties

A mixture of two unimodal distributions with differing means is not necessarily bimodal. The combined distribution of heights of men and women is sometimes used as an example of a bimodal distribution, but in fact the difference in mean heights of men and women is too small relative to their standard deviations to produce bimodality.[5]

Bimodal distributions have the peculiar property that, unlike unimodal distributions, the mean may be a more robust sample estimator than the median.[8] This is clearly the case when the distribution is U-shaped like the arcsine distribution. It may not be true when the distribution has one or more long tails.

Moments of mixtures

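Let

$$f(x) = p\,g_1(x) + (1-p)\,g_2(x)$$

where gi is a probability distribution and p is the mixing parameter.

The moments of f(x) are[9]

$$\mu = p\mu_1 + (1-p)\mu_2$$

$$\nu_2 = p[\sigma_1^2 + \delta_1^2] + (1-p)[\sigma_2^2 + \delta_2^2]$$

$$\nu_3 = p[S_1\sigma_1^3 + 3\delta_1\sigma_1^2 + \delta_1^3] + (1-p)[S_2\sigma_2^3 + 3\delta_2\sigma_2^2 + \delta_2^3]$$

$$\nu_4 = p[K_1\sigma_1^4 + 4S_1\delta_1\sigma_1^3 + 6\delta_1^2\sigma_1^2 + \delta_1^4] + (1-p)[K_2\sigma_2^4 + 4S_2\delta_2\sigma_2^3 + 6\delta_2^2\sigma_2^2 + \delta_2^4]$$

where

$$\mu = \int x f(x)\,dx, \qquad \delta_i = \mu_i - \mu, \qquad \nu_r = \int (x - \mu)^r f(x)\,dx$$

μi and σi² are the mean and variance of the ith distribution, and Si and Ki are the skewness and kurtosis of the ith distribution.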
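As a worked illustration, the central moments of a two-component mixture f = p·g1 + (1 − p)·g2 can be computed from the component means, standard deviations, skewnesses and kurtoses. The sketch assumes δi = μi − μ (component mean minus mixture mean) and that Ki is the non-excess kurtosis (3 for a normal distribution); the function name is hypothetical.

```python
def mixture_moments(p, mu1, sigma1, s1, k1, mu2, sigma2, s2, k2):
    """Mean and 2nd to 4th central moments of p*g1 + (1-p)*g2.

    s_i and k_i are the skewness and (non-excess) kurtosis of component i.
    """
    q = 1.0 - p
    mu = p * mu1 + q * mu2
    d1, d2 = mu1 - mu, mu2 - mu          # offsets of component means from mu
    nu2 = p * (sigma1**2 + d1**2) + q * (sigma2**2 + d2**2)
    nu3 = (p * (s1 * sigma1**3 + 3 * d1 * sigma1**2 + d1**3)
           + q * (s2 * sigma2**3 + 3 * d2 * sigma2**2 + d2**3))
    nu4 = (p * (k1 * sigma1**4 + 4 * s1 * d1 * sigma1**3
                + 6 * d1**2 * sigma1**2 + d1**4)
           + q * (k2 * sigma2**4 + 4 * s2 * d2 * sigma2**3
                  + 6 * d2**2 * sigma2**2 + d2**4))
    return mu, nu2, nu3, nu4

# Equal mixture of N(-2, 1) and N(2, 1); a normal has skewness 0 and kurtosis 3.
mu, nu2, nu3, nu4 = mixture_moments(0.5, -2.0, 1.0, 0.0, 3.0, 2.0, 1.0, 0.0, 3.0)
mixture_kurtosis = nu4 / nu2**2
print(mu, nu2, nu3, nu4, round(mixture_kurtosis, 2))
```

In this example the mixture variance is 5 and the fourth central moment is 43, giving a kurtosis of 1.72, well below the value of 3 for a single normal distribution.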

Summary statistics

Bimodal distributions are a commonly used example of how summary statistics such as the mean, median, and standard deviation can be deceptive when used on an arbitrary distribution. For example, in the distribution in Figure 1, the mean and median would be about zero, even though zero is not a typical value. The standard deviation is also larger than the standard deviation of each normal distribution.
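A small simulation illustrates the point. The mixture below (equal weights, components N(−2, 1) and N(2, 1)) is a hypothetical stand-in, not the distribution of Figure 1.

```python
import random
import statistics

rng = random.Random(1)

# Hypothetical symmetric bimodal sample: equal mixture of N(-2, 1) and N(2, 1).
sample = [rng.gauss(-2.0, 1.0) if rng.random() < 0.5 else rng.gauss(2.0, 1.0)
          for _ in range(20_000)]

mean = statistics.fmean(sample)
median = statistics.median(sample)
sd = statistics.pstdev(sample)

# Fraction of observations that actually lie close to the mean and median.
near_zero = sum(abs(x) < 0.5 for x in sample) / len(sample)

print(round(mean, 2), round(median, 2), round(sd, 2), round(near_zero, 3))
```

The mean and median both land near zero, yet only a small fraction of observations fall within half a unit of zero, and the overall standard deviation exceeds the component standard deviation of 1.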

Although several have been suggested, there is at present no generally agreed summary statistic (or set of statistics) to quantify the parameters of a general bimodal distribution. For a mixture of two normal distributions the means and standard deviations along with the mixing parameter (the weight for the combination) are usually used, a total of five parameters.

Ashman's D

A statistic that may be useful is Ashman's D:[10]

$$D = \frac{\sqrt{2}\,|\mu_1 - \mu_2|}{\sqrt{\sigma_1^2 + \sigma_2^2}}$$

where μ1, μ2 are the means and σ1, σ2 are the standard deviations.

For a mixture of two normal distributions D > 2 is required for a clean separation of the distributions.
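A minimal sketch of the computation, assuming the commonly cited form D = √2·|μ1 − μ2| / √(σ1² + σ2²); the function name and the example values are illustrative.

```python
import math

def ashman_d(mu1, sigma1, mu2, sigma2):
    """Ashman's D for two components with the given means and standard deviations."""
    return math.sqrt(2.0) * abs(mu1 - mu2) / math.sqrt(sigma1**2 + sigma2**2)

d_separated = ashman_d(0.0, 1.0, 3.0, 1.0)    # means 3 sd apart
d_overlapping = ashman_d(0.0, 1.0, 1.0, 1.0)  # means 1 sd apart
print(d_separated, d_overlapping)
```

With equal standard deviations D reduces to the separation of the means in units of the common standard deviation, so the first pair clears the D > 2 threshold and the second does not.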

Bimodality index

The bimodality index assumes that the distribution is a mixture of two normal distributions with equal variances but differing means.[11] It is defined as follows:

$$\delta = \frac{|\mu_1 - \mu_2|}{\sigma}$$

where μ1, μ2 are the means and σ is the common standard deviation.

$$BI = \delta\sqrt{p(1-p)}$$

where p is the mixing parameter.
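As a sketch, assuming the index takes the form BI = δ·√(p(1 − p)) with δ = |μ1 − μ2| / σ, the calculation is a one-liner. The function name and example values are illustrative.

```python
import math

def bimodality_index(mu1, mu2, sigma, p):
    """Bimodality index for an equal-variance two-normal mixture with weight p."""
    delta = abs(mu1 - mu2) / sigma       # standardised distance between the means
    return delta * math.sqrt(p * (1.0 - p))

bi_balanced = bimodality_index(0.0, 4.0, 1.0, 0.5)  # evenly split mixture
bi_skewed = bimodality_index(0.0, 4.0, 1.0, 0.1)    # one small component
print(bi_balanced, round(bi_skewed, 2))
```

For a fixed separation the index is largest when the two components are equally weighted and shrinks as one component becomes rare.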

Bimodality coefficient

Sarle's bimodality coefficient b is[12]

$$b = \frac{\gamma^2 + 1}{\kappa}$$

where γ is the skewness and κ is the kurtosis. The kurtosis is here defined to be the standardised fourth moment around the mean. The value of b lies between 0 and 1.[13] The logic behind this coefficient is that a bimodal distribution will have very low kurtosis, an asymmetric character, or both, all of which increase this coefficient.

The formula for a finite sample is[14]

$$b = \frac{g^2 + 1}{k + \dfrac{3(n-1)^2}{(n-2)(n-3)}}$$

where n is the number of items in the sample, g is the sample skewness and k is the sample excess kurtosis.

The value of b for the uniform distribution is 5/9. This is also its value for the exponential distribution. Values greater than 5/9 may indicate a bimodal or multimodal distribution. The maximum value (1.0) is reached only by a Bernoulli distribution with only two distinct values or the sum of two different Dirac delta functions.
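These reference values can be reproduced directly. The sketch assumes b = (γ² + 1)/κ for a distribution and b = (g² + 1)/(k + 3(n − 1)²/((n − 2)(n − 3))) for a sample; the function names are hypothetical.

```python
def bimodality_coefficient(skewness, kurtosis):
    """Sarle's b from the skewness and the (non-excess) kurtosis."""
    return (skewness**2 + 1.0) / kurtosis

def sample_bimodality_coefficient(g, k, n):
    """Finite-sample b from sample skewness g, sample excess kurtosis k, size n > 3."""
    correction = 3.0 * (n - 1) ** 2 / ((n - 2) * (n - 3))
    return (g**2 + 1.0) / (k + correction)

b_uniform = bimodality_coefficient(0.0, 1.8)      # uniform: skewness 0, kurtosis 9/5
b_exponential = bimodality_coefficient(2.0, 9.0)  # exponential: skewness 2, kurtosis 9
b_two_point = bimodality_coefficient(0.0, 1.0)    # symmetric two-point distribution

# For large n the sample formula approaches the population one (uniform case).
b_large_sample = sample_bimodality_coefficient(0.0, -1.2, 10**6)

print(b_uniform, b_exponential, b_two_point, b_large_sample)
```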

The distribution of this statistic is unknown. It is related to a statistic proposed earlier by Pearson, the difference between the kurtosis and the square of the skewness (see below).

Statistical tests

A study of data drawn from a mixture of two normal distributions found that separation into the two normal distributions was difficult unless the means were separated by 4-6 standard deviations.[15]

Unimodal vs. bimodal distribution

A necessary but not sufficient condition for a symmetrical distribution to be bimodal is that the kurtosis be less than three.[16][17] Here the kurtosis is defined to be the standardised fourth moment around the mean. The reference given prefers to use the excess kurtosis - the kurtosis less 3.

Pearson in 1894 was the first to devise a procedure to test whether a distribution could be resolved into two normal distributions.[18] This method required the solution of a ninth-order polynomial. In a subsequent paper Pearson reported that for any distribution skewness² + 1 < kurtosis.[13] Later Pearson showed that[19]

$$b_2 - b_1 \geq 1$$

where b2 is the kurtosis and b1 is the square of the skewness. Equality holds only for the two-point Bernoulli distribution or the sum of two different Dirac delta functions. These are the most extreme cases of bimodality possible. When the two points carry equal weight, the kurtosis is 1 and, because the distribution is symmetrical, the skewness is 0, so the difference is 1.
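The equality case of Pearson's bound (kurtosis minus squared skewness at least 1) can be verified directly for a two-point distribution. The short derivation below uses the standard moments of a Bernoulli variable with success probability p and q = 1 − p; the symbols γ and κ for skewness and kurtosis are notation chosen here for the illustration.

```latex
\begin{aligned}
\gamma^2 &= \frac{(1-2p)^2}{pq} = \frac{1-4pq}{pq},
\qquad
\kappa = 3 + \frac{1-6pq}{pq} = \frac{1-3pq}{pq}, \\[4pt]
\kappa - \gamma^2 &= \frac{(1-3pq) - (1-4pq)}{pq} = \frac{pq}{pq} = 1 .
\end{aligned}
```

The difference equals 1 for every 0 < p < 1, not only in the symmetric case p = 1/2, where γ = 0 and κ = 1.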

Baker proposed a transformation to convert a bimodal to a unimodal distribution.[20]

Several tests of unimodality versus bimodality have been proposed: Haldane suggested one based on second central differences.[21] Larkin later introduced a test based on the F test;[22] Bennett created one based on the G test.[23] Tokeshi has proposed a fourth test.[24][25] A test based on a likelihood ratio has been proposed by Holzmann and Vollmer.[26]

Antimode

Statistical tests for the antimode are known.[27]

General tests

To test if a distribution is other than unimodal, several additional tests have been devised: the bandwidth test,[28] the dip test,[29] the excess mass test,[30] the MAP test,[31] the mode existence test,[32] the runt test,[33][34] the span test,[35] and the saddle test.

The dip test is available for use in R.[1] The p-value of the test ranges between 0 and 1. P-values less than 0.05 indicate significant departure from unimodality, and p-values greater than 0.05 but less than 0.10 suggest bimodality with marginal significance.

Beta-normal distribution

This distribution is bimodal for certain values of its parameters. A test for these values has been described.[36]

Graphical methods

In the study of sediments particle size is frequently bimodal. Empirically it has been found useful to plot the frequency against the log( size ) of the particles. This usually gives a clear separation of the particles into a bimodal distribution.

An alternative method is to plot the log of the particle size against the cumulative frequency. This graph will usually consist of two reasonably straight lines with a connecting line corresponding to the antimode.

Silverman's test

Silverman introduced a bootstrap method for the number of modes.[28] The test uses a fixed bandwidth which reduces the power of the test and its interpretability. Undersmoothed densities may have an excessive number of modes whose count during bootstrapping is unstable.
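The quantity behind Silverman's approach is the critical bandwidth: the smallest kernel bandwidth at which a Gaussian kernel density estimate has at most k modes. The sketch below computes only that quantity by bisection, relying on the fact that the number of modes of a Gaussian kernel estimate does not increase with the bandwidth. The bootstrap step of the test is omitted, and the function names, grid size, search bounds and toy data are assumptions made for illustration.

```python
import math

def kde(x, data, h):
    """Gaussian kernel density estimate at x with bandwidth h."""
    total = sum(math.exp(-0.5 * ((x - d) / h) ** 2) for d in data)
    return total / (len(data) * h * math.sqrt(2.0 * math.pi))

def count_kde_modes(data, h, grid_size=1024):
    """Count local maxima of the kernel density estimate on a regular grid."""
    lo, hi = min(data) - 3.0 * h, max(data) + 3.0 * h
    xs = [lo + (hi - lo) * i / (grid_size - 1) for i in range(grid_size)]
    ys = [kde(x, data, h) for x in xs]
    return sum(1 for i in range(1, grid_size - 1) if ys[i - 1] < ys[i] >= ys[i + 1])

def critical_bandwidth(data, k=1, iters=40):
    """Approximate the smallest bandwidth giving at most k modes (bisection)."""
    h_lo, h_hi = 1e-3, max(data) - min(data)   # assumed search bracket
    for _ in range(iters):
        mid = 0.5 * (h_lo + h_hi)
        if count_kde_modes(data, mid) <= k:
            h_hi = mid      # smooth enough: try a smaller bandwidth
        else:
            h_lo = mid      # too many modes: need more smoothing
    return h_hi

# Toy data with two tight clusters around 0 and 5.
data = [-0.2, -0.1, 0.0, 0.1, 0.2, 4.8, 4.9, 5.0, 5.1, 5.2]
modes_small_h = count_kde_modes(data, 0.5)
modes_large_h = count_kde_modes(data, 4.0)
h_crit_1 = critical_bandwidth(data, k=1)
h_crit_2 = critical_bandwidth(data, k=2)
print(modes_small_h, modes_large_h, round(h_crit_1, 2), round(h_crit_2, 2))
```

A large critical bandwidth for k = 1 relative to the spread within each cluster is what suggests more than one mode; the bootstrap in the full test assesses whether that bandwidth is unusually large.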

Parameter estimation and fitting curves

Assuming that the distribution is known to be bimodal or has been shown to be bimodal by one or more of the tests above, it is frequently desirable to fit a curve to the data. This may be difficult.

Bayesian methods may be useful in difficult cases.

Software

- Two normal distributions

A package for R is available for testing for bimodality.[2] This package assumes that the data are distributed as a sum of two normal distributions. If this assumption is not correct the results may not be reliable. It also includes functions for fitting a sum of two normal distributions to the data.

Assuming that the distribution is a mixture of two normal distributions then the expectation-maximization algorithm may be used to determine the parameters. Several programmes are available for this including Cluster.[37]
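A minimal sketch of the expectation-maximization iteration for a two-normal mixture is given below. It is not the algorithm of any particular package: the starting values, the fixed iteration count, the numerical safeguards and the synthetic data are all assumptions made for illustration.

```python
import math
import random

def normal_pdf(x, mu, sigma):
    z = (x - mu) / sigma
    return math.exp(-0.5 * z * z) / (sigma * math.sqrt(2.0 * math.pi))

def em_two_normals(data, iters=200):
    """Fit p*N(mu1, s1) + (1-p)*N(mu2, s2) by EM; returns (p, mu1, s1, mu2, s2)."""
    n = len(data)
    # Crude starting values (an assumption of this sketch, not a recommendation).
    mu1, mu2 = min(data), max(data)
    s1 = s2 = max((mu2 - mu1) / 4.0, 1e-6)
    p = 0.5
    for _ in range(iters):
        # E-step: responsibility of component 1 for each observation.
        resp = []
        for x in data:
            a = p * normal_pdf(x, mu1, s1)
            b = (1.0 - p) * normal_pdf(x, mu2, s2)
            resp.append(a / (a + b) if a + b > 0.0 else 0.5)
        # M-step: weighted means, standard deviations and mixing proportion.
        n1 = max(sum(resp), 1e-12)
        n2 = max(n - n1, 1e-12)
        mu1 = sum(r * x for r, x in zip(resp, data)) / n1
        mu2 = sum((1.0 - r) * x for r, x in zip(resp, data)) / n2
        s1 = max(math.sqrt(sum(r * (x - mu1) ** 2
                               for r, x in zip(resp, data)) / n1), 1e-6)
        s2 = max(math.sqrt(sum((1.0 - r) * (x - mu2) ** 2
                               for r, x in zip(resp, data)) / n2), 1e-6)
        p = n1 / n
    return p, mu1, s1, mu2, s2

# Synthetic data: 300 draws from N(0, 1) and 700 draws from N(6, 1.5).
rng = random.Random(42)
data = ([rng.gauss(0.0, 1.0) for _ in range(300)]
        + [rng.gauss(6.0, 1.5) for _ in range(700)])
p_hat, mu1_hat, s1_hat, mu2_hat, s2_hat = em_two_normals(data)
print(round(p_hat, 2), round(mu1_hat, 2), round(mu2_hat, 2))
```

In practice EM can converge to a poor local optimum when the components overlap heavily, so several starting points and a convergence check on the log-likelihood are commonly used.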

- Other distributions

The mixtools package, also available for R, can test for and estimate the parameters of a number of different distributions.[38]

Another package for a mixture of two right tailed gamma distributions is available.[39]

Two other packages for R are available to fit mixture models: flexmix[40] and mclust.[41]

The statistical programme SAS can also fit a variety of mixed distributions with the command PROCFREQ.

References

- ^ a b Weber, NA (1946). "Dimorphism in the African Oecophylla worker and an anomaly (Hym.: Formicidae)" (PDF). Annals of the Entomological Society of America. 39: 7–10.

- ^ Galtung J (1969) Theory and methods of social research. Universitetsforlaget, Oslo ISBN 0043000177

- ^ Introduction to tropical fish stock assessment

- ^ Fieller E (1932) The distribution of the index in a normal bivariate population. Biometrika (24):428-440

- ^ a b Schilling, Mark F.; Watkins, Ann E.; Watkins, William (2002). "Is Human Height Bimodal?". The American Statistician. 56 (3): 223–229. doi:10.1198/00031300265.

- ^ Eisenberger I (1964) Genesis of bimodal distributions. Technometrics 6 (4) 357-363

- ^ Ray S, Lindsay BG (2005) The topography of multivariate normal mixtures. Ann Stat 33 (5) 2042-2065

- ^ Mosteller F, Tukey JW (1977) Data analysis and regression: a second course in statistics. Reading, Mass, Addison-Wesley Pub Co

- ^ Kim T-H, White H (2003) On more robust estimation of skewness and kurtosis: Simulation and application to the S & P 500 index

- ^ Ashman KM, Bird CM, Zepf SE(1994) Astronomical J 108: 2348

- ^ Wang J, Wen S, Symmans WF, Pusztai L, Coombes KR (2009) The bimodality index: a criterion for discovering and ranking bimodal signatures from cancer gene expression profiling data. Cancer Inform 7:199-216

- ^ Ellison AM (1987) Effect of seed dimorphism on the density-dependent dynamics of experimental populations of Atriplex triangularis (Chenopodiaceae). Am J Botany 74(8): 1280-1288

- ^ a b Pearson K (1916) Mathematical contributions to the theory of evolution, XIX: Second supplement to a memoir on skew variation. Phil Trans Roy Soc London. Series A 216 (538–548): 429–457. Bibcode 1916RSPTA.216..429P. doi:10.1098/rsta.1916.0009. JSTOR 91092

- ^ SAS Institute Inc. (2012). SAS/STAT 12.1 user’s guide. Cary, NC: Author.

- ^ Jackson PR, Tucker GT, Woods HF (1989) Testing for bimodality in frequency distributions of data suggesting polymorphisms of drug metabolism--hypothesis testing. Br J Clin Pharmacol 28(6) 655–662

- ^ Gnedin OY (2010) Quantifying Bimodality.

- ^ Muratov AL, Gnedin OY (2010) Modeling the metallicity distribution of globular clusters. Ap J (submitted) arXiv:1002.1325

- ^ Pearson K (1894) Contributions to the mathematical theory of evolution: On the dissection of asymmetrical frequency-curves. Phil Trans Roy Soc Series A, Part 1, 185: 71-90

- ^ Pearson K (1929) Editorial note. Biometrika 21: 370-375

- ^ Baker GA (1930) Transformations of bimodal distributions. Ann Math Stat 1 (4) 334-344

- ^ Haldane JBS (1951) Simple tests for bimodality and bitangentiality. Ann Eugenics 16 (1) 359–364 DOI: 10.1111/j.1469-1809.1951.tb02488.x

- ^ Larkin RP (1979) An algorithm for assessing bimodality vs. unimodality in a univariate distribution. Behavior Research Methods 11 (4) 467-468 DOI: 10.3758/BF03205709

- ^ Bennett SC (1992) Sexual dimorphism of Pteranodon and other pterosaurs, with comments on cranial crests. J Vert Paleont 12 (4) 422-434

- ^ Tokeshi M (1992) Dynamics and distribution in animal communities; theory and analysis. Researches in Population Ecology 34:249–273

- ^ Barreto S, Borges PAV, Guo Q (2003) A typing error in Tokeshi’s test of bimodality. Global Ecology & Biogeography 12: 173–174

- ^ Holzmann H, Vollmer S (2008) A likelihood ratio test for bimodality in two-component mixtures – with application to regional income distribution in the EU. Advances in Statistical Analysis 92 (1) 57-69

- ^ Hartigan JA (2000) Testing for antimodes. Studies in Classification, Data Analysis, and Knowledge Organization 169-181

- ^ a b Silverman BW (1981) Using kernel density estimates to investigate multimodality. J Roy Statist Soc Ser B 43:97-99

- ^ Hartigan JA, Hartigan PM (1985) The dip test of unimodality. Ann Statist 13 (1) 70-84

- ^ Mueller DW, Sawitzki G (1991) Excess mass estimates and tests for multimodality. JASA 86, 738 -746

- ^ Rozál GPM Hartigan JA (1994) The MAP test for multimodality. J Classification 11 (1) 5-36 DOI: 10.1007/BF01201021

- ^ Minnotte MC (1997) Nonparametric testing of the existence of modes. Ann Statist 25 (4) 1646-1660

- ^ Hartigan JA, Mohanty S (1992) The RUNT test for multimodality. J Classification 9: 63-70

- ^ Andrushkiw RI, Klyushin DD, Petunin YI (2008) Theory Stoch Processes 14 (1) 1-6

- ^ Hartigan JA (1988) The span test of multimodality

- ^ http://www.amstat.org/sections/srms/Proceedings/y2002/Files/JSM2002-000150.pdf

- ^ https://engineering.purdue.edu/~bouman/software/cluster/

- ^ http://cran.r-project.org/web/packages/mixtools/index.html

- ^ http://cran.r-project.org/web/packages/discrimARTs/discrimARTs.pdf

- ^ http://cran.r-project.org/web/packages/flexmix/index.html

- ^ http://cran.r-project.org/web/packages/mclust/index.html
